Unlocking the FCC data at Hack for Western Mass

A team of civic-minded hackers in Western Massachusetts has built a tool that makes it easier to access documents from the FCC proceeding on regulating the prison telephone industry.

by Peter Wagner, June 3, 2013

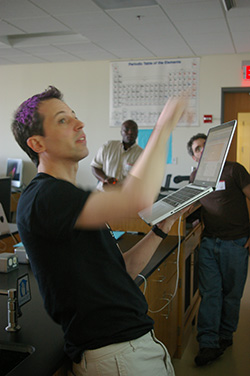

Challenge representative Peter Wagner of the Prison Policy Initiative explaining how the FCC organizes data by separate “proceedings”. (Photo: Stephen Brewer)

Sondra Morin, Gyepi Sam, and Al Nutile exploring the namespace of the FCC’s database structure. (Photo: Stephen Brewer)

Al Nutile (center) sharing an idea with Gyepi Sam (left) and Peter Wagner (right). (Photo: Stephen Brewer)

John Tobey and daughter weigh in on the database structure. (Photo: Stephen Brewer)

Jake Mitchell reviewing the project plan. (Photo: Stephen Brewer)

The team listening to Aaron Smith (rear, left) describe what he discovered about how the FCC organizes its data. (Photo: Molly McLeod)

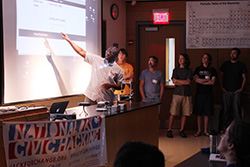

Gyepi Sam explains the team’s code to the Hack for Western Mass group. (Photo: Molly McLeod)

A team of civic-minded hackers in Western Massachusetts has built a tool to make it easier for advocates, policymakers and journalists to access documents and weigh in on telecommunications debates currently before the Federal Communications Commission.

Critical debates about telecommunications policy are archived in plain sight on the FCC’s website, but they are organized in a way that’s extremely difficult to use. Focusing on just one issue currently before the FCC (the possible creation of price caps to reduce the $1/minute cost of phone calls from prisons and jails), the team created an alternative interface for the FCC’s data. This dataset was particularly challenging because it includes the comments of about 100,000 people in approximately 7,000 PDF files, and the content ranged from well-formatted PDFs to bad scans of handwritten letters from incarcerated people.

On the first morning of Hack for Western Mass, I made a short pitch about the prison phone industry and the need to make the data more accessible. A team was formed to make this data searchable and to add some basic tag support so that journalists and others could more easily find relevant filings.

The software written by the team scrapes the FCC website for new comments, downloads the PDF files, extracts the embedded text or runs OCR when there is none, and stores that text in a database so that it can be searched. When someone finds a document of interest, we link back to the original PDF on the FCC’s website.
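As a rough illustration of the store-and-search step, here is a minimal sketch, not the team’s actual code. It assumes an in-memory SQLite table and a plain substring match in place of a real search backend. The function names and the `example.org` URLs are hypothetical.

```python
import sqlite3


def extract_or_ocr(embedded_text, ocr):
    """Prefer text embedded in the PDF; fall back to OCR for scanned pages.

    `ocr` is a hypothetical callable that returns recognized text.
    """
    text = (embedded_text or "").strip()
    return text if text else ocr()


def build_store():
    """Create an in-memory table holding each filing's text and source link."""
    db = sqlite3.connect(":memory:")
    db.execute(
        "CREATE TABLE filings ("
        "id INTEGER PRIMARY KEY, source_url TEXT NOT NULL, body TEXT NOT NULL)"
    )
    return db


def add_filing(db, source_url, body):
    db.execute(
        "INSERT INTO filings (source_url, body) VALUES (?, ?)",
        (source_url, body),
    )


def search(db, keyword):
    """Return the original PDF links of filings whose text contains keyword."""
    rows = db.execute(
        "SELECT source_url FROM filings WHERE body LIKE ? ORDER BY id",
        (f"%{keyword}%",),
    )
    return [row[0] for row in rows]


db = build_store()
# A well-formatted PDF: the embedded text is used directly.
add_filing(db, "https://example.org/filing-1.pdf",
           extract_or_ocr("Price caps would lower rates.", lambda: ""))
# A scanned letter: no embedded text, so the OCR result is stored instead.
add_filing(db, "https://example.org/filing-2.pdf",
           extract_or_ocr("", lambda: "Letter about the cost of calls home."))
results = search(db, "price caps")
```

Storing the source URL next to the extracted text is what lets a search result point back to the original PDF rather than to a copy.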

Our software also imports all of the metadata currently available on the FCC website (submitter, date, address, etc.) and includes a tagging system, so that documents can carry additional metadata that is useful to the people interested in a specific proceeding. For example, the FCC scans the letters that come in to a proceeding in batches and labels each batch with the author “numerous”. To fix this, our system allows users to manually tag each page with two pieces of metadata: the state of origin and a content type. The content type records whether the document or page comes from telephone companies, correctional system administrators, state regulators, incarcerated people, or the people who aren’t incarcerated and have to pay the high rates required by the monopolistic contracts. Users can then search for keywords and filter the results by these tags. In this way, a journalist or a member of Congress could quickly find the comments of people from their particular area.
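The per-page tagging and filtering described above could be modeled roughly as follows. This is a sketch under stated assumptions: the `Page` class, the tag vocabulary strings, and the function names are hypothetical and are not taken from the team’s Rails application.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical labels for the five content types named in the text.
CONTENT_TYPES = {
    "telephone company",
    "correctional administrator",
    "state regulator",
    "incarcerated person",
    "ratepayer",
}


@dataclass
class Page:
    filing_id: str
    page_number: int
    text: str
    state: Optional[str] = None         # state of origin, e.g. "MA"
    content_type: Optional[str] = None  # one of CONTENT_TYPES


def tag_page(page, state, content_type):
    """Attach the two manual tags to a page, rejecting unknown content types."""
    if content_type not in CONTENT_TYPES:
        raise ValueError(f"unknown content type: {content_type!r}")
    page.state = state.upper()
    page.content_type = content_type


def find_pages(pages, keyword, state=None, content_type=None):
    """Keyword search, optionally narrowed by state and content type."""
    needle = keyword.lower()
    return [
        p for p in pages
        if needle in p.text.lower()
        and (state is None or p.state == state.upper())
        and (content_type is None or p.content_type == content_type)
    ]
```

Tagging pages rather than whole filings matters here because a single scanned batch labeled “numerous” can contain letters from many states.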

Current progress

The basic structure of the site and tool is done, but the data is still being processed. The scraper was written in Python, and a series of Python scripts then convert the PDFs to the portable anymap (PNM) format, clean the images using OnPaper, and perform the optical character recognition using GOCR. Rails manages the database, and the ActiveAdmin gem provides an interface for authenticated users to manage content. Solr is set up as well for fast search results. The scripts to download and process PDFs from the FCC website work, and thousands of documents are currently being processed. Tagging has already begun in a spreadsheet. When the data is fully imported, we’ll set the system up to add new FCC entries nightly, and we’ll build an interface so that the tagging can be crowdsourced.
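One way the planned nightly update might work is to remember which filings have already been processed and handle only the new ones. The sketch below assumes each scraped listing entry is a dictionary with an `id` key. That shape, and both function names, are hypothetical.

```python
def select_new_filings(listing, processed_ids):
    """Return entries not yet processed, in listing order, without duplicates."""
    seen = set(processed_ids)
    fresh = []
    for filing in listing:
        if filing["id"] not in seen:
            seen.add(filing["id"])
            fresh.append(filing)
    return fresh


def nightly_run(listing, processed_ids, process):
    """Process each new filing once and record its id as done.

    `process` stands in for the download, convert, clean, and OCR steps.
    """
    for filing in select_new_filings(listing, processed_ids):
        process(filing)
        processed_ids.add(filing["id"])
    return processed_ids
```

Recording an id only after `process` returns means that a filing whose processing fails partway is retried on the next run instead of being silently skipped.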

Future work and adaptability of the code

This project was conceived to give people concerned about prison telephone regulation better access to this data, but it could be readily adapted to other issues of concern at the FCC. (It could also, perhaps, inspire some improvements in how the FCC manages and publishes public comments.)

One idea we didn’t get to, and can’t until the text importation is complete, is to use our database of the text of the filings to perform various kinds of statistical analyses about who is making which arguments.
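As one very simple example of such an analysis, the sketch below counts how often chosen terms appear in filings from each type of commenter. It assumes the filings have already been tagged by commenter type. The group labels, the sample sentences, and the function name are invented for illustration and are not real filing data.

```python
import re
from collections import Counter, defaultdict


def term_counts_by_group(documents, terms):
    """Count occurrences of each term per group.

    `documents` is an iterable of (group, text) pairs; matching is on
    whole lowercase words.
    """
    counts = defaultdict(Counter)
    for group, text in documents:
        word_counts = Counter(re.findall(r"[a-z']+", text.lower()))
        for term in terms:
            counts[group][term] += word_counts[term.lower()]
    return {group: dict(c) for group, c in counts.items()}


# Invented sample sentences, for illustration only.
sample = [
    ("telephone company", "Security costs justify the rates."),
    ("ratepayer", "The rates are too high. These rates hurt families."),
    ("ratepayer", "Families pay."),
]
summary = term_counts_by_group(sample, ["rates", "families", "security"])
```

Raw counts like these are only a starting point, since text from OCR of handwritten letters will contain recognition errors that lower the counts for those groups.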

A final website with the data will be unveiled soon after the data processing is complete. The team’s code is available now on GitHub.

Many thanks to this weekend’s team:

- Jennie D’Ambroise

- Sam Duncan

- Jonathan Hills

- Jake Mitchell

- Sondra Morin

- Alfred Nutile

- Gyepi Sam

- Aaron Smith

- John Tobey

Stay tuned for the public launch of the FCC tool!
